UTILIZING MACHINE VISION FOR MANUFACTURING DEFECT DETECTION AND PREVENTION

With Industry 4.0 on the rise, manufacturers face increasing pressure to improve the speed and accuracy of their production lines.

Manufacturing defects can become a heavy financial burden. According to the American Society for Quality (ASQ), many organizations spend 15–20% of sales revenue on quality-related costs, and some spend as much as 40% of total operations. Detecting defects before products go out the door, and going a step further to prevent them before they happen, reduces scrap and rework, saving time and improving a manufacturer’s bottom line.

Rather than relying on human eyes alone, manufacturers are turning to machine vision solutions, which combine software and hardware, to perform visual inspections of products. Machine vision removes subjectivity from the inspection process, provides more consistent results, and reduces the risk of human error.


HOW MACHINE VISION PERFORMS DEFECT DETECTION FOR QUALITY CONTROL

Machine vision allows software to receive visual input from cameras, analyze what it’s seeing, and act on that information. In a machine vision solution for defect detection, industrial cameras capture images as products come down the line. Defect detection software scans the images for product defects, flags anomalies, triggers a reject mechanism to remove the defective part from the line, and sends alerts to managers on the floor. Unlike human inspectors, machine vision systems capture and retain data that, over time, can reveal the issues in a production process that lead to defects, allowing manufacturers to pursue prevention techniques.
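The flow described above (capture, analyze, flag, reject, alert, retain data) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any vendor's API: `InspectionLine`, `has_dark_spot`, and the threshold value are hypothetical, and plain nested lists stand in for camera frames.

```python
from dataclasses import dataclass, field
from typing import Callable, List

Image = List[List[int]]  # grayscale image as rows of 0-255 pixel values


@dataclass
class InspectionLine:
    """Hypothetical inspection loop: detect, reject, alert, and retain data."""
    detect: Callable[[Image], bool]  # returns True when a defect is found
    history: List[dict] = field(default_factory=list)
    rejected: List[int] = field(default_factory=list)
    alerts: List[str] = field(default_factory=list)

    def process(self, part_id: int, image: Image) -> bool:
        defective = self.detect(image)
        if defective:
            # Stand-ins for a reject actuator and a notification to the floor.
            self.rejected.append(part_id)
            self.alerts.append(f"part {part_id}: defect flagged")
        # Every result is retained so trends can be analyzed later.
        self.history.append({"part_id": part_id, "defective": defective})
        return defective


def has_dark_spot(image: Image, threshold: int = 50) -> bool:
    """Toy detector (assumption): flag any pixel darker than the threshold."""
    return any(pixel < threshold for row in image for pixel in row)


line = InspectionLine(detect=has_dark_spot)
good_part = [[200, 210], [205, 198]]
bad_part = [[200, 12], [205, 198]]
line.process(1, good_part)
line.process(2, bad_part)
print(line.rejected)  # [2]
```

Keeping the detector as a pluggable function means the same loop could wrap a rule-based check or a trained model without changing the reject and logging logic.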

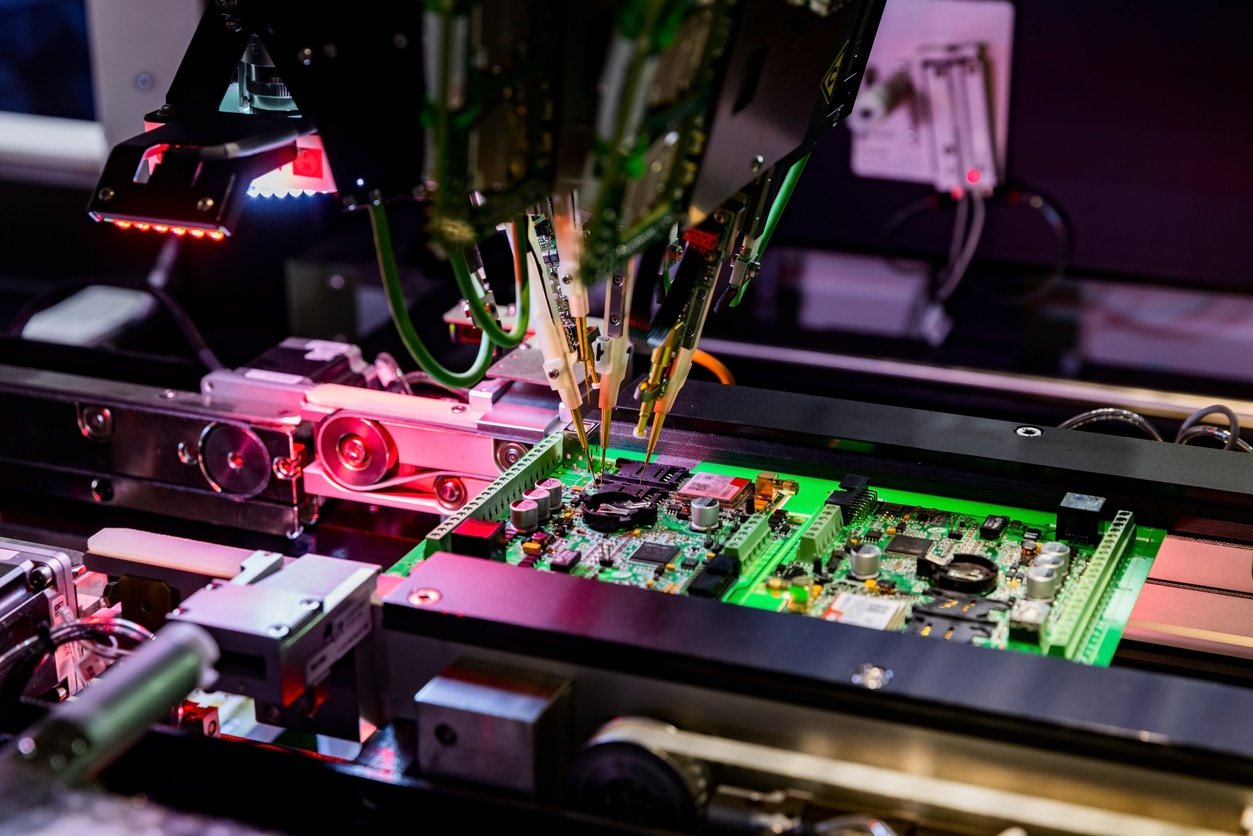

Fig 1: Machine vision cameras can help identify defects and ensure proper assembly of electronic circuit boards.

Although the technology is evolving quickly, machine vision is an excellent choice for some quality control applications and less suited to others. Paired with high-speed cameras, machine vision solutions can inspect many parts per second when looking for specific, well-defined details. For example, in a semiconductor fabrication plant, a machine vision defect detection solution might grade the quality of each silicon wafer, knocking wafers off the line if they are cracked or otherwise defective. The software can struggle, however, with unexpected anomalies such as changes in part appearance due to contamination, orientation, or occlusion.

While solutions on the market vary, most follow a similar pattern for getting up and running and for improving results over time.

- Feature extraction: First, a manufacturer presents the software with a template to check for defects. It often comes in the form of an image with particular factors highlighted so that when anomalies appear, they will be flagged as defects. For example, if an automotive valve spring is bent, the machine vision solution sends an alert and a robotic arm removes it from the line.

- Training the model: As implementation begins, human managers feed labeled images to the software to deepen its knowledge of the template. The images are classified as good parts or bad parts, based on whether a specific defect appears.

- Testing: After rigorous trials and frequent corrections to make sure the defect detection is accurate, the solution is ready to operate without constant human oversight.
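The three steps above can be illustrated with a deliberately simple sketch: compare each part image to a template, use labeled good and bad images to choose a decision threshold, then check accuracy on held-out images. The scoring method (mean absolute pixel difference), the midpoint threshold rule, and all names and pixel values here are assumptions chosen for illustration; production systems typically use far richer features or learned models.

```python
from typing import List, Tuple

Image = List[List[int]]
Labeled = List[Tuple[Image, bool]]  # (image, is_defect)


def mean_abs_diff(image: Image, template: Image) -> float:
    """Average absolute pixel difference between a part image and the template."""
    total, count = 0, 0
    for row_img, row_tpl in zip(image, template):
        for a, b in zip(row_img, row_tpl):
            total += abs(a - b)
            count += 1
    return total / count


def fit_threshold(template: Image, labeled: Labeled) -> float:
    """Pick the cutoff midway between the worst good score and the best bad score.

    Assumes the labeled set contains both classes and that they are separable.
    """
    good = [mean_abs_diff(img, template) for img, is_defect in labeled if not is_defect]
    bad = [mean_abs_diff(img, template) for img, is_defect in labeled if is_defect]
    return (max(good) + min(bad)) / 2


def accuracy(template: Image, threshold: float, labeled: Labeled) -> float:
    """Fraction of labeled images whose prediction matches the label."""
    correct = sum(
        (mean_abs_diff(img, template) > threshold) == is_defect
        for img, is_defect in labeled
    )
    return correct / len(labeled)


template = [[100, 100], [100, 100]]
training = [
    ([[101, 99], [100, 102]], False),
    ([[98, 100], [100, 100]], False),
    ([[100, 100], [20, 100]], True),
    ([[60, 100], [100, 60]], True),
]
held_out = [
    ([[100, 103], [100, 100]], False),
    ([[100, 100], [100, 40]], True),
]

threshold = fit_threshold(template, training)
print(threshold)                                 # 10.5
print(accuracy(template, threshold, held_out))   # 1.0
```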

Because machine vision can quickly catalog patterns in defects, an advantage over human inspectors, the system can help establish their root causes. Applying manufacturing analytics in real time, the software can mark exactly which variable was incorrect, such as a misaligned product label, and note when and how often the defect occurs.
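As a rough sketch of this kind of analysis, the snippet below counts logged defects by type and by hour of day. The record format, timestamps, and defect names are invented for illustration; a real deployment would read them from the inspection system's own log or database.

```python
from collections import Counter
from datetime import datetime

# Hypothetical defect log: (ISO timestamp, defect type)
records = [
    ("2024-03-04T08:15:00", "label_misaligned"),
    ("2024-03-04T08:40:00", "label_misaligned"),
    ("2024-03-04T08:55:00", "seal_missing"),
    ("2024-03-04T13:05:00", "label_misaligned"),
    ("2024-03-04T14:20:00", "scratch"),
]


def summarize(log):
    """Count how often each defect occurs and in which hour of the day."""
    by_type = Counter(defect for _, defect in log)
    by_hour = Counter(datetime.fromisoformat(ts).hour for ts, _ in log)
    return by_type, by_hour


by_type, by_hour = summarize(records)
print(by_type.most_common(1))  # [('label_misaligned', 3)]
print(by_hour.most_common(1))  # [(8, 3)]
```

A cluster like this one, with most defects of one type in one hour, is the sort of pattern that would prompt a manager to look at what changed on the line at that time.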

MACHINE VISION USE CASES FOR IDENTIFYING MANUFACTURING DEFECTS

A wide range of potential applications exists for machine vision defect detection and prevention in manufacturing facilities. A few examples include:

- Manufacturing plants: High-resolution machine vision cameras can detect even the smallest abnormalities in products. That level of detailed inspection is particularly important when the product is a medical device or car engine component, in which any defect could be dangerous.

- Food production: In the food production process, machine vision can spot mislabeled packaging, read text and barcodes on labels, and monitor amounts of added ingredients, such as seasoning on potato chips. By providing documentation and data about such details as labels, ingredients, and safety seals, machine vision solutions help food manufacturers meet stringent industry standards and regulations.

- Transportation: Using machine vision technology, vehicle manufacturers can monitor assembly processes and spot defects in real time. For example, software might flag a flaw in the surface of a car tire and notify a human or robot to take it off the line. Using automated systems to identify and remove defective parts aids in regulatory compliance because it supplies a stream of recorded data.

- Medical industry: Products made for hospitals, doctors, and patients need to be carefully inspected to ensure they are correctly sealed and labeled. Medication errors impact more than seven million patients in the U.S. every year, and machine vision solutions could reduce that number by catching defects that human inspectors cannot see.

GPUDIRECT: ZERO-DATA-LOSS IMAGING

Emergent leverages the power and reliability of GigE Vision and the widely accessible Ethernet infrastructure to ensure robust and reliable data acquisition and transmission, delivering exceptional performance. By utilizing an optimized GigE Vision setup and by supporting technologies like NVIDIA’s GPUDirect for direct image transfer to GPU memory, Emergent removes the burden on the system’s CPU and memory when handling substantial data transfers. Instead, it harnesses the power of GPUs for data processing tasks while maintaining compatibility with the GigE Vision standard and interoperability with compliant software and peripherals.

PATTERN MATCHING

The video below shows how easily you can create and prototype an algorithm that performs high-quality pattern matching while writing only your own custom GPU CUDA code.
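For readers unfamiliar with pattern matching, the following is a small CPU reference sketch of one common approach, zero-mean normalized cross-correlation (NCC), written in plain Python. It is not Emergent's algorithm or the CUDA code referred to above; the function names and the tiny test image are assumptions for illustration. In a GPU version, each candidate position would typically be scored by its own thread.

```python
import math
from typing import List, Tuple

Image = List[List[float]]


def ncc(patch: Image, template: Image) -> float:
    """Zero-mean normalized cross-correlation of two equally sized patches."""
    p = [v for row in patch for v in row]
    t = [v for row in template for v in row]
    mean_p = sum(p) / len(p)
    mean_t = sum(t) / len(t)
    num = sum((a - mean_p) * (b - mean_t) for a, b in zip(p, t))
    den = math.sqrt(
        sum((a - mean_p) ** 2 for a in p) * sum((b - mean_t) ** 2 for b in t)
    )
    return num / den if den else 0.0  # flat patches carry no pattern


def best_match(image: Image, template: Image) -> Tuple[Tuple[int, int], float]:
    """Slide the template over the image; return the best (row, col) and its score."""
    th, tw = len(template), len(template[0])
    best_score, best_pos = -2.0, (0, 0)  # NCC scores lie in [-1, 1]
    for y in range(len(image) - th + 1):
        for x in range(len(image[0]) - tw + 1):
            patch = [row[x:x + tw] for row in image[y:y + th]]
            score = ncc(patch, template)
            if score > best_score:
                best_score, best_pos = score, (y, x)
    return best_pos, best_score


image = [
    [0, 0, 0, 0, 0],
    [0, 0, 9, 1, 0],
    [0, 0, 1, 9, 0],
    [0, 0, 0, 0, 0],
]
template = [[9, 1], [1, 9]]
position, score = best_match(image, template)
print(position)  # (1, 2)
```

Because NCC subtracts the mean and normalizes by contrast, the score is insensitive to uniform brightness and gain changes, which is one reason it is a popular baseline for inspection tasks.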

INFERENCE

The video below shows how easily you can add and test your own trained inference model to detect and classify arbitrary objects. Simply train your model with PyTorch or TensorFlow and add it to your own eCapture Pro plug-in. Then instantiate the plug-in, connect to your desired camera, and click run. It does not get easier than this.

With well-trained models, inference applications can be developed and deployed with many Emergent cameras on a single PC with a couple of GPUs using Emergent’s GPUDirect functionality. Nobody does performance applications like Emergent.

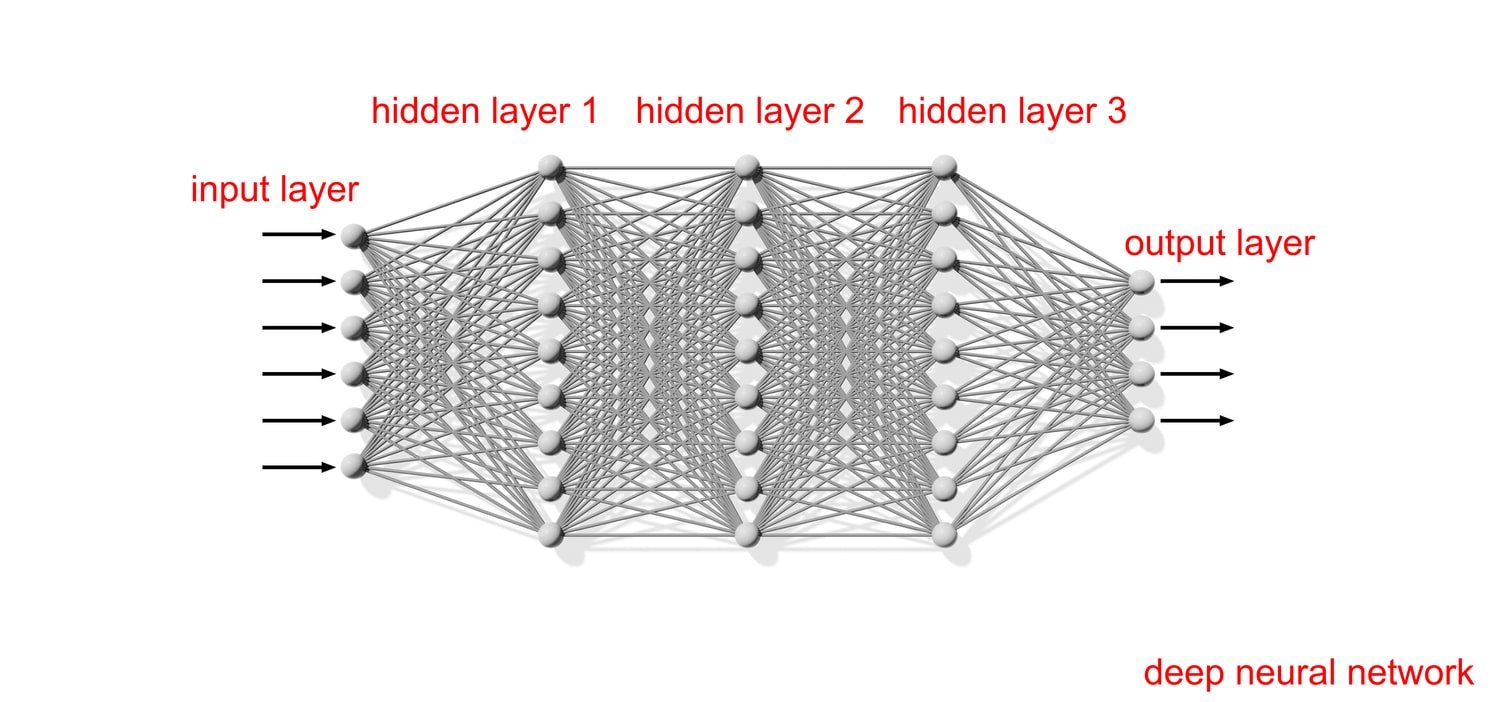

Fig 2: Modeled after the human brain, neural networks are a subset of machine learning at the heart of deep learning algorithms that allow a computer to learn to perform specific tasks based on training examples.

POLARIZATION

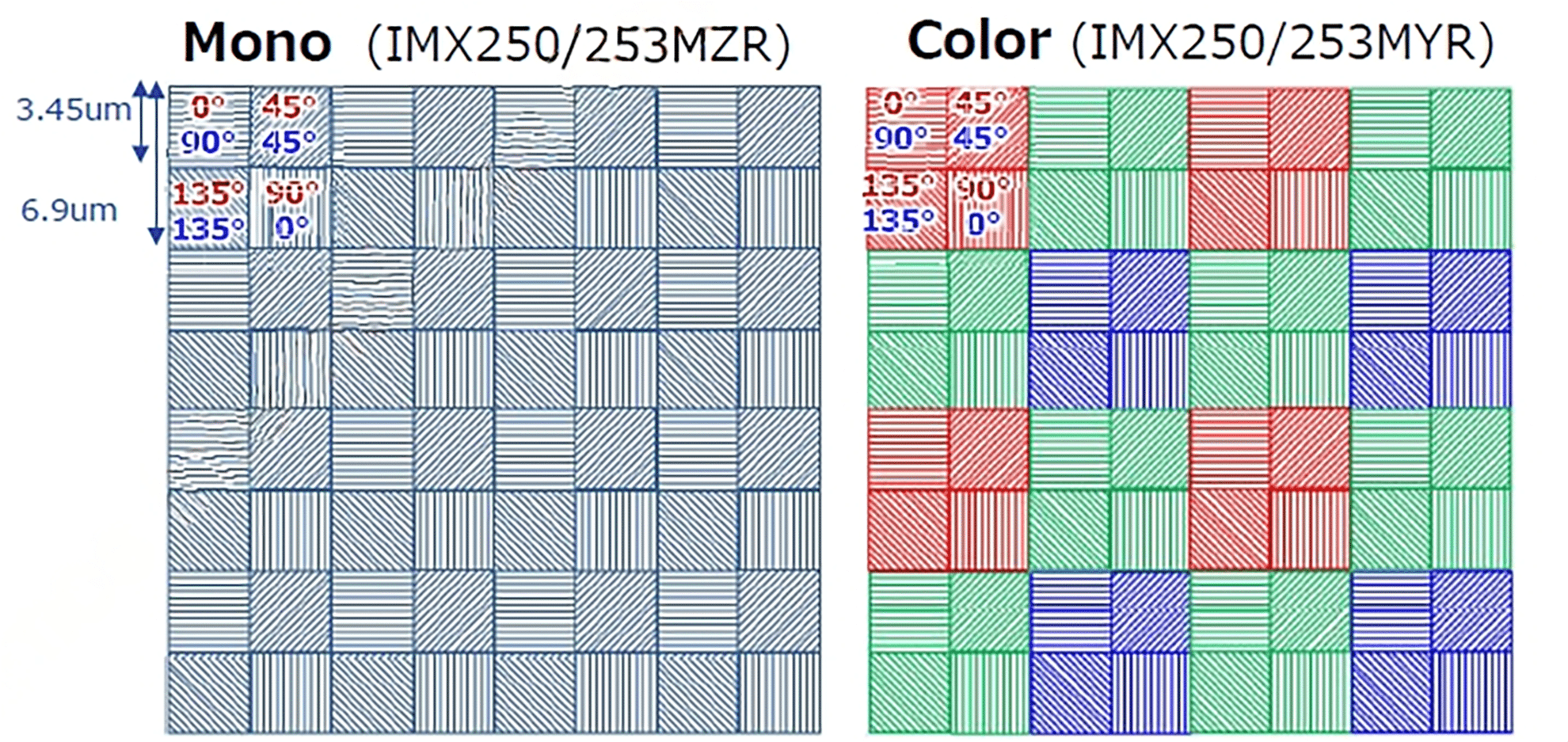

Fig 3: In Sony PolarSens CMOS polarized sensors, tiny wire-grid polarizers over every lens have 0°, 45°, 90°, and 135° polarization angles in four-pixel groups.
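The four readings in each pixel group are commonly combined into the linear Stokes parameters, from which the degree of linear polarization (DoLP) and angle of linear polarization (AoLP) follow. The sketch below applies those standard formulas to one four-pixel group; the function names are illustrative, the intensities are synthetic values derived from Malus's law, and the physical position of each angle within the group is deliberately not assumed.

```python
import math


def linear_stokes(i0: float, i45: float, i90: float, i135: float):
    """Linear Stokes parameters from intensities behind 0/45/90/135 degree polarizers."""
    s0 = (i0 + i45 + i90 + i135) / 2.0  # total intensity
    s1 = i0 - i90                       # horizontal vs. vertical preference
    s2 = i45 - i135                     # diagonal preference
    return s0, s1, s2


def dolp_aolp(i0: float, i45: float, i90: float, i135: float):
    """Return (degree of linear polarization in 0..1, angle in degrees)."""
    s0, s1, s2 = linear_stokes(i0, i45, i90, i135)
    if s0 == 0:
        return 0.0, 0.0  # no light, nothing to measure
    dolp = math.hypot(s1, s2) / s0
    aolp = 0.5 * math.degrees(math.atan2(s2, s1))
    return dolp, aolp


# Fully polarized light at 0 degrees: I(theta) = cos^2(theta) by Malus's law.
print(dolp_aolp(1.0, 0.5, 0.0, 0.5))
# Unpolarized light: every polarizer passes half the intensity.
print(dolp_aolp(0.5, 0.5, 0.5, 0.5))
```

In defect detection, maps of DoLP and AoLP can make scratches, stress, or surface contamination stand out even when they are hard to see in an ordinary intensity image.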

EMERGENT GIGE VISION CAMERAS FOR DEFECT DETECTION APPLICATIONS

| Model | Chroma | Resolution | Frame Rate | Interface | Sensor Name | Pixel Size |
|---|---|---|---|---|---|---|
| HR-5000-S-M | Mono | 5MP | 163fps | 10GigE SFP+ | Sony IMX250LLR | 3.45×3.45µm |
| HR-5000-S-C | Color | 5MP | 163fps | 10GigE SFP+ | Sony IMX250LLR | 3.45×3.45µm |
| HB-12000-SB-M | Mono | 12.4MP | 100fps | 10GigE SFP+ | Sony IMX535 | 2.74×2.74µm |
| HB-12000-SB-C | Color | 12.4MP | 100fps | 10GigE SFP+ | Sony IMX535 | 2.74×2.74µm |
| HB-25000-SB-M | Mono | 24.47MP | 98fps | 25GigE SFP28 | Sony S IMX530 | 2.74×2.74µm |
| HB-25000-SB-C | Color | 24.47MP | 98fps | 25GigE SFP28 | Sony S IMX530 | 2.74×2.74µm |
| HZ-10000-G-M | Mono | 10MP | 1000fps | 100GigE QSFP28 | Gpixel GSPRINT4510 | 4.5×4.5µm |
| HZ-10000-G-C | Color | 10MP | 1000fps | 100GigE QSFP28 | Gpixel GSPRINT4510 | 4.5×4.5µm |
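To see why each camera is paired with its interface, it helps to estimate the raw data rate from resolution and frame rate. The sketch below uses the nominal megapixel and frame-rate figures from the table and assumes 8 bits per pixel with no protocol overhead; both are simplifying assumptions, so the results are rough estimates rather than specifications.

```python
def required_gbps(megapixels: float, fps: float, bits_per_pixel: int = 8) -> float:
    """Approximate raw image data rate in gigabits per second."""
    return megapixels * 1e6 * fps * bits_per_pixel / 1e9


# (model, nominal megapixels, frames per second, link speed in Gbps) from the table
cameras = [
    ("HR-5000-S-M", 5.0, 163, 10),
    ("HB-12000-SB-M", 12.4, 100, 10),
    ("HB-25000-SB-M", 24.47, 98, 25),
    ("HZ-10000-G-M", 10.0, 1000, 100),
]

for model, megapixels, fps, link_gbps in cameras:
    rate = required_gbps(megapixels, fps)
    print(f"{model}: ~{rate:.2f} Gbps raw on a {link_gbps} Gbps link")
```

Under these assumptions the estimates come out to roughly 6.5, 9.9, 19.2, and 80 Gbps, each within the nominal rate of the listed interface. Higher bit depths would raise the required rate proportionally.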

For additional camera options, check out our interactive system designer tool.